Postico 1.5.1 Download

Apache Spark is a fast and general-purpose cluster computing system. It provides high-level APIs in Java, Scala, Python and R, and an optimized engine that supports general execution graphs. It also supports a rich set of higher-level tools including Spark SQL for SQL and structured data processing, MLlib for machine learning, GraphX for graph processing, and Spark Streaming.

Get Spark from the downloads page of the project website. This documentation is for Spark version 1.5.1. Spark uses Hadoop’s client libraries for HDFS and YARN. Downloads are pre-packaged for a handful of popular Hadoop versions. Users can also download a “Hadoop free” binary and run Spark with any Hadoop version by augmenting Spark’s classpath.

If you’d like to build Spark from source, visit Building Spark.


Spark runs on both Windows and UNIX-like systems (e.g. Linux, Mac OS). It’s easy to run locally on one machine: all you need is java installed on your system PATH, or the JAVA_HOME environment variable pointing to a Java installation.
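As a rough illustration of the requirement above, the following Python sketch looks for a java executable on PATH and then under JAVA_HOME. The helper name find_java and the lookup order are assumptions made for this example; this is not Spark's own launcher logic.

```python
import os
import shutil


def find_java(env=None):
    """Return the path of a usable `java` executable, or None.

    Hypothetical helper for illustration only (not part of Spark).
    Assumed order: PATH first, then JAVA_HOME/bin.
    """
    env = os.environ if env is None else env

    # 1. Look for `java` on the PATH taken from the given environment.
    on_path = shutil.which("java", path=env.get("PATH", ""))
    if on_path:
        return on_path

    # 2. Fall back to JAVA_HOME/bin/java (or java.exe on Windows).
    java_home = env.get("JAVA_HOME")
    if java_home:
        for name in ("java", "java.exe"):
            candidate = os.path.join(java_home, "bin", name)
            if os.path.isfile(candidate) and os.access(candidate, os.X_OK):
                return candidate

    return None
```

Calling find_java() with no arguments inspects the current process environment; a return value of None suggests that neither condition described above is met.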

Spark runs on Java 7+, Python 2.6+ and R 3.1+. For the Scala API, Spark 1.5.1 uses Scala 2.10. You will need to use a compatible Scala version (2.10.x).
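The version requirements above can be expressed as a small check. This is a minimal sketch: the names MINIMUMS, parse_version, is_supported and scala_compatible are hypothetical, and it assumes versions are given as plain dotted numbers (for Java, the major number such as "7" or "8" rather than the legacy "1.7" form).

```python
# Minimum versions as stated in the text for Spark 1.5.1.
MINIMUMS = {"java": (7,), "python": (2, 6), "r": (3, 1)}


def parse_version(text):
    """Turn a dotted version string such as '2.10.4' into a tuple of ints."""
    return tuple(int(part) for part in text.split("."))


def is_supported(runtime, version):
    """True if `version` meets the stated minimum for `runtime`."""
    return parse_version(version) >= MINIMUMS[runtime]


def scala_compatible(version):
    """True if `version` belongs to the Scala 2.10.x line."""
    return parse_version(version)[:2] == (2, 10)
```

For instance, is_supported("python", "2.7") holds while is_supported("python", "2.5") does not, and scala_compatible("2.11.7") is false because Spark 1.5.1 is documented here as using Scala 2.10.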

Spark comes with several sample programs. Scala, Java, Python and R examples are in the examples/src/main directory. To run one of the Java or Scala sample programs, use bin/run-example <class> [params] in the top-level Spark directory. (Behind the scenes, this invokes the more general spark-submit script for launching applications.)

You can also run Spark interactively through a modified version of the Scala shell. This is a great way to learn the framework.

The --master option specifies the master URL for a distributed cluster, or local to run locally with one thread, or local[N] to run locally with N threads. You should start by using local for testing. For a full list of options, run Spark shell with the --help option.
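To make the local and local[N] forms concrete, here is a minimal Python sketch that maps a --master value to the number of local threads it requests. The function name local_thread_count is hypothetical, and the sketch covers only the two local forms described above; it is not Spark's own parser.

```python
import re

# Matches the "local[N]" form, where N is a whole number of threads.
_LOCAL_N = re.compile(r"^local\[(\d+)\]$")


def local_thread_count(master):
    """Return the thread count for a local master string.

    Returns None for any other value, which this sketch treats as the
    master URL of a distributed cluster.
    """
    if master == "local":
        return 1  # plain "local" runs with one thread
    match = _LOCAL_N.match(master)
    if match:
        return int(match.group(1))
    return None
```

With this helper, local_thread_count("local") gives 1 and local_thread_count("local[4]") gives 4, while a cluster URL yields None.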

Spark also provides a Python API. To run Spark interactively in a Python interpreter, use bin/pyspark.

Example applications are also provided in Python.

Spark also provides an experimental R API since 1.4 (only DataFrames APIs included). To run Spark interactively in an R interpreter, use bin/sparkR.

Example applications are also provided in R.

The Spark cluster mode overview explains the key concepts in running on a cluster. Spark can run either by itself or over several existing cluster managers. It currently provides several options for deployment:

- Amazon EC2: our EC2 scripts let you launch a cluster in about 5 minutes

- Standalone Deploy Mode: simplest way to deploy Spark on a private cluster

Programming Guides:

- Quick Start: a quick introduction to the Spark API; start here!

- Spark Programming Guide: detailed overview of Spark in all supported languages (Scala, Java, Python, R)

- Modules built on Spark:

- Spark Streaming: processing real-time data streams

- Spark SQL and DataFrames: support for structured data and relational queries

- MLlib: built-in machine learning library

- GraphX: Spark’s new API for graph processing

- Bagel (Pregel on Spark): older, simple graph processing model

API Docs:

Deployment Guides:

- Cluster Overview: overview of concepts and components when running on a cluster

- Submitting Applications: packaging and deploying applications

- Deployment modes:

- Amazon EC2: scripts that let you launch a cluster on EC2 in about 5 minutes

- Standalone Deploy Mode: launch a standalone cluster quickly without a third-party cluster manager

- Mesos: deploy a private cluster using Apache Mesos

- YARN: deploy Spark on top of Hadoop NextGen (YARN)

Other Documents:

- Configuration: customize Spark via its configuration system

- Monitoring: track the behavior of your applications

- Tuning Guide: best practices to optimize performance and memory use

- Job Scheduling: scheduling resources across and within Spark applications

- Security: Spark security support

- Hardware Provisioning: recommendations for cluster hardware

- 3rd Party Hadoop Distributions: using common Hadoop distributions

- Integration with other storage systems:

- Building Spark: build Spark using the Maven system

- Supplemental Projects: related third party Spark projects

External Resources:

- Spark Community resources, including local meetups

- Mailing Lists: ask questions about Spark here

- AMP Camps: a series of training camps at UC Berkeley that featured talks and exercises about Spark, Spark Streaming, Mesos, and more. Videos, slides and exercises are available online for free.

- Code Examples: more are also available in the examples subfolder of Spark (Scala, Java, Python, R)

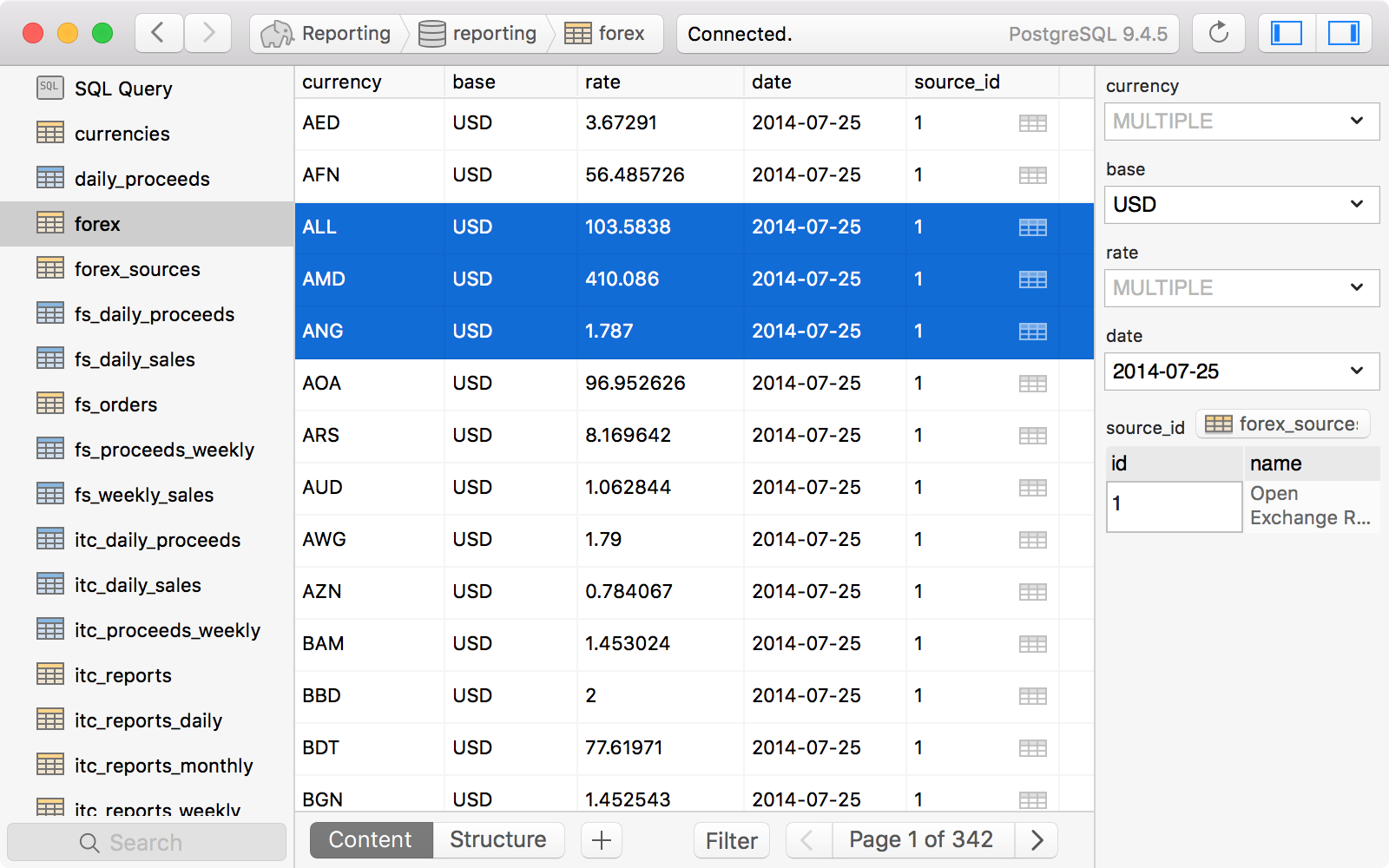

Postico 1.5.1

Description

Postico is a modern database app for your Mac.

Postico is the perfect tool for data entry, analytics, and application development.

– connect to Postgres.app

– connect to PostgreSQL 8.0, 8.1, 8.2, 8.4, 9.0, 9.1, 9.2, 9.3, 9.4, 9.5 and 9.6 servers

– connect to cloud services like Heroku Postgres, Amazon Redshift, Amazon RDS

– connect to other RDBMs that use the PostgreSQL protocol, like CockroachDB

Postico is the perfect app for managing your data. It has great tools for data entry. Filter rows that contain a search term, or set up advanced filters with multiple conditions. Quickly view rows from related tables, and save time by editing multiple rows at once.

For analytics workloads, Postico has a powerful query editor with syntax highlighting and many advanced text editing features. Execute multiple queries at once, or execute them one at a time and export results quickly.

For application developers, Postico offers a full featured table designer. Add, rename and remove columns, set default values, and add column constraints (NOT NULL, UNIQUE, CHECK constraints, foreign keys etc.). Document your database by adding comments to every table, view, column, and constraint.

But the best part of Postico is how well it works. Postico is made on a Mac for a Mac. It works great with all your other Mac apps. Use all the usual keyboard shortcuts. Postico gets the basic things like copy/paste just right, and also supports more advanced features like services for text editing.

– added a preference to enable Dark Mode even when the system is using light mode

– improved scrolling performance for table views on macOS 10.13 and 10.14

– fixed a number of crashes:

– fixed a crash after double clicking an error symbol

– fixed a crash when opening popovers with high contrast mode enabled

– fixed a crash related to query history navigation

– fixed a crash when exporting some tables to CSV file

– fixed a crash when pressing shift-tab and the cursor was at the end of the text view

– fixed a drawing bug with the execute button
